When Insurance Software Trials Run Too Long to Succeed

Extended POC trials feel safer but produce worse outcomes. Research shows trials lasting beyond three months succeed at roughly one-third the rate of shorter ones. Learn why decision fatigue, not insufficient validation, causes most trial failures.

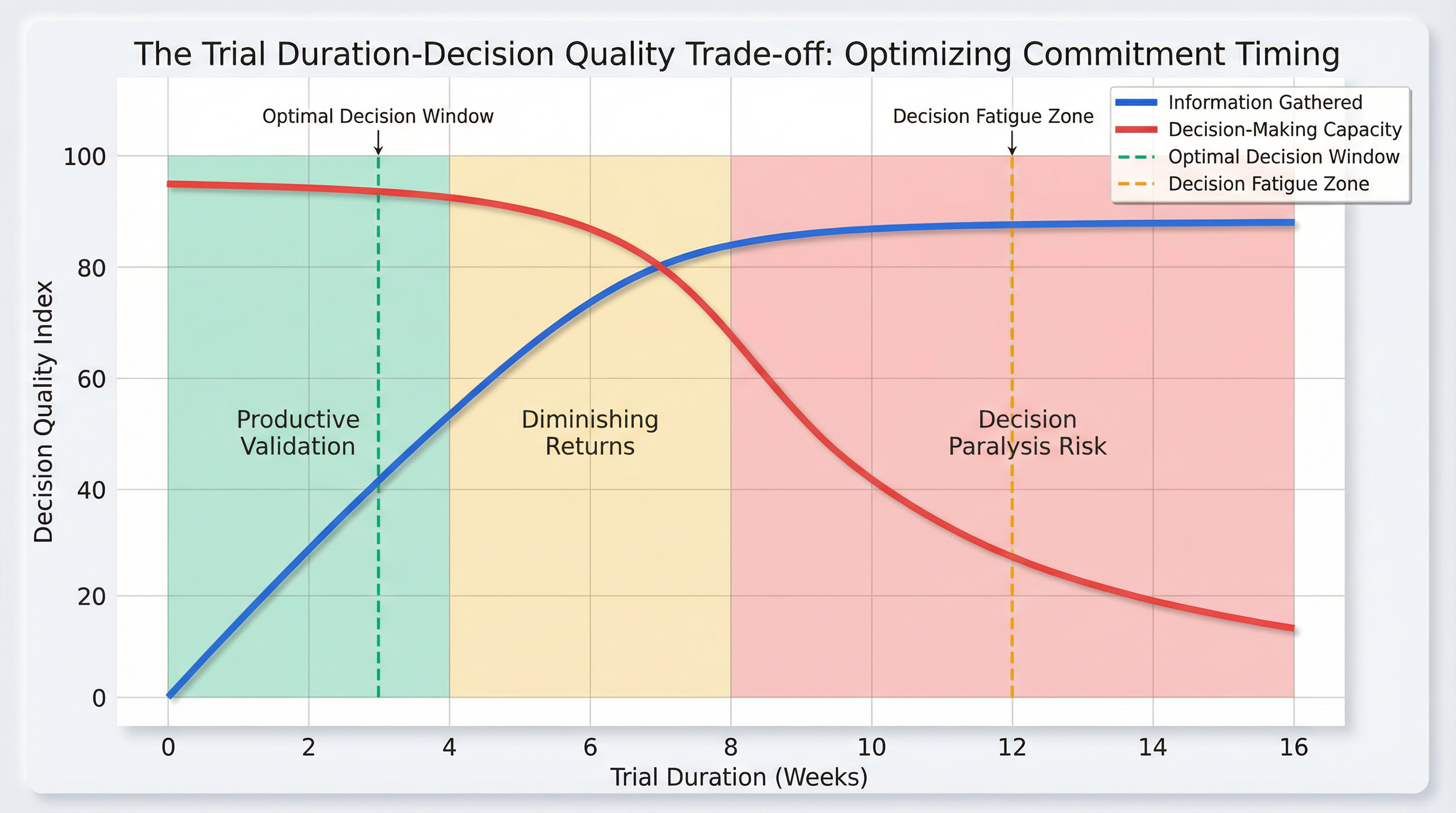

Most insurance buyers approach software trials with a straightforward assumption: more testing reduces risk. Spend an extra month validating edge cases, involve additional stakeholders, test one more integration scenario: each extension feels like due diligence. Yet enterprise data reveals a counterintuitive reality. Proof-of-concept trials lasting beyond three months convert to production deployments at roughly one-third the rate of trials completed within that window. The problem isn't insufficient validation. It's that extended evaluation periods create cognitive conditions that make sound decisions nearly impossible.

The Mechanics of Trial Scope Expansion

Insurance software trials typically begin with focused objectives. A mid-market brokerage might start with a simple goal: validate that a comparison platform can accurately pull quotes from three carriers within their existing workflow. The initial test involves two underwriters, a handful of real policies, and a two-week timeline. Everyone agrees this scope makes sense.

By week three, the picture has changed. The operations director suggests testing the platform's customer portal since "we're already evaluating it anyway." The IT lead wants to verify API rate limits under load. A senior broker mentions that the platform claims to handle commercial auto differently and asks whether that's been validated. Each request sounds reasonable in isolation. None of these stakeholders is trying to derail the process—they're genuinely attempting to reduce risk by ensuring comprehensive evaluation.

This is where the first cognitive trap emerges. The team conflates thoroughness with effectiveness. In practice, extending trials to accommodate every stakeholder concern doesn't produce better decisions. Research tracking enterprise software purchases found that 59% of B2B buying processes end with no decision at all, not because the options were inadequate, but because the mental effort required to reach consensus became unsustainable. The evaluation process itself became the obstacle.

Figure: Stages of Trial Scope Creep. The progression from focused validation to decision avoidance typically follows a predictable pattern, with each stage introducing additional cognitive load that makes final commitment progressively more difficult.

Why Information Accumulation Doesn't Equal Decision Quality

Insurance buyers operate under the belief that gathering more data improves decision-making. This assumption holds true only within a specific window. During the first two to four weeks of a trial, new information genuinely clarifies the picture. The team discovers how the software handles their specific policy types, whether integrations work as advertised, and whether the vendor's support responds adequately to technical questions.

After this initial period, something shifts. The rate at which new information changes the fundamental assessment begins to flatten. By week eight, most trials have answered the core questions that matter: Does this software solve our primary business problem? Can our team actually use it? Does the vendor deliver on their promises? Additional testing at this stage rarely reveals deal-breaking flaws that weren't apparent earlier. Instead, it uncovers edge cases, minor workflow variations, and hypothetical scenarios that may never materialize in production.

Meanwhile, decision-making capacity follows a different trajectory. The cognitive resources required to evaluate complex purchases—particularly those involving multiple stakeholders with competing priorities—deplete over time. After numerous meetings, feature comparisons, and internal debates, procurement teams experience what researchers term decision fatigue. This isn't a matter of laziness or lack of commitment. It's a documented psychological phenomenon where the quality of decisions deteriorates after extended periods of evaluation, regardless of how much additional information becomes available.

The intersection of these two curves creates a narrow window where teams have gathered sufficient information while still maintaining the cognitive capacity to act on it decisively. For most insurance software trials, this window closes somewhere between weeks two and four. Trials that extend beyond this point don't fail because they reveal disqualifying problems. They fail because the team's ability to process information and commit to a decision has eroded.

The Hidden Cost of Stakeholder Expansion

Insurance software purchases rarely involve a single decision-maker. The typical enterprise technology purchase now engages six to ten stakeholders, each bringing legitimate concerns tied to their functional area. A trial that begins with the operations team naturally expands to include IT security, finance, compliance, and various user groups. This expansion feels prudent—after all, these stakeholders will need to live with the chosen solution.

What buyers miss is how stakeholder proliferation compounds decision fatigue. Each additional voice doesn't just add another perspective; it multiplies the number of approval paths required for consensus. A trial involving three stakeholders has only three pairs of people who might disagree. A trial involving ten stakeholders requires navigating 45 potential pairwise disagreements, plus the challenge of aligning all ten simultaneously.
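The arithmetic behind that jump is the standard count of distinct pairs in a group, n(n-1)/2. The short sketch below reproduces the figure of 45 for ten stakeholders; the function name `pairwise_relationships` is hypothetical, chosen only for illustration.

```python
from math import comb


def pairwise_relationships(stakeholders: int) -> int:
    """Count distinct stakeholder pairs: n choose 2, i.e. n * (n - 1) / 2."""
    return comb(stakeholders, 2)


# Compare a small trial team with a typical enterprise buying group.
for n in (3, 6, 10):
    print(f"{n} stakeholders -> {pairwise_relationships(n)} potential pairwise disagreements")
```

Going from three stakeholders to ten roughly triples the headcount but multiplies the number of pairs fifteenfold, which is why consensus gets harder much faster than the invite list grows.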

This mathematical reality explains why trials that start focused and time-bound often drift into months-long evaluation marathons. The original business need—perhaps a pressing requirement to improve quote turnaround times or reduce manual data entry—fades into the background as the process becomes consumed by the mechanics of stakeholder management. By week twelve, the team is no longer asking "Does this solve our problem?" They're asking "How do we get everyone to agree?" These are fundamentally different questions, and the latter rarely produces successful software implementations.

When Testing Becomes Risk Rather Than Risk Mitigation

Insurance buyers extend trials because they fear making the wrong choice. The logic seems airtight: if three months of testing is good, surely six months is better. This reasoning inverts at a certain point. Extended trials don't eliminate risk—they create it.

The first risk is opportunity cost. While an insurance brokerage spends month five debating whether a comparison platform handles a specific edge case correctly, competitors who committed earlier are already realizing value from their implementations. The business problem that justified the software search in the first place remains unsolved, and the gap between current state and desired state continues to widen.

The second risk is decision quality degradation. Teams that have spent months in evaluation mode often make final decisions in a rushed, compromised state. After exhausting their capacity for nuanced analysis, they either default to the lowest-price option (ignoring total cost of ownership), select based on a single vocal stakeholder's preference (bypassing the multi-factor analysis that justified the extended trial), or simply abandon the purchase entirely. None of these outcomes reflect better decision-making than a focused, time-bound evaluation would have produced.

The third risk is implementation timing. Software trials that conclude in month six or seven often face immediate pressure to compress implementation timelines to "make up for lost time." This compression introduces the very risks that the extended trial was meant to avoid—inadequate training, rushed data migration, and insufficient change management. The team ends up with both a prolonged evaluation and a hasty deployment, combining the worst aspects of both approaches.

Figure: Trial Duration vs Decision Quality. Decision-making capacity deteriorates significantly faster than information value accumulates during extended trials, creating a narrow optimal window for commitment that most buyers miss by continuing to test.

Recognizing the Commitment Threshold

The challenge for insurance buyers isn't identifying whether a software trial has gathered enough information—it's recognizing when continued testing has crossed from productive validation into counterproductive delay. Several indicators signal this threshold.

The first is repetitive testing. When a trial enters its third month and stakeholders are still requesting tests of scenarios that are minor variations of previously validated functionality, the team has likely exhausted genuinely new information. At this stage, additional testing serves primarily to satisfy psychological comfort rather than reveal material new insights.

The second indicator is stakeholder turnover in trial participation. If the people actively engaged in week eight differ significantly from those involved in week two, the trial has lost its original decision-making context. New stakeholders naturally want to "catch up" by re-testing elements that earlier participants already validated, creating a cycle where the trial never actually concludes.

The third signal is the emergence of hypothetical scenario testing. Productive trials focus on validating how software performs against known, current business requirements. When teams start testing for "what if we expand into this new line of business" or "what if we double in size," they've shifted from evaluation into speculation. These scenarios may be worth considering during contract negotiations or implementation planning, but they're poor reasons to extend a trial.

The fourth indicator is meeting fatigue. When internal discussions about the trial shift from substantive feature evaluation to process management—debating who needs to be involved, what additional approvals are required, or how to structure the next round of testing—the cognitive resources needed for actual decision-making have been depleted.
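One way to keep these four indicators visible during a trial is to record them explicitly at each review. The sketch below is a minimal illustration, not a prescribed method: the class `TrialSignals`, its field names, and the helper `active_signals` are all hypothetical, and the article does not specify how many signals should trigger a decision.

```python
from dataclasses import dataclass, fields


@dataclass
class TrialSignals:
    """The four warning signs described above (field names are assumptions)."""
    repetitive_testing: bool = False       # re-testing minor variations of validated features
    stakeholder_turnover: bool = False     # participants differ from those in the early weeks
    hypothetical_scenarios: bool = False   # testing "what if" cases instead of current needs
    process_over_substance: bool = False   # meetings debate process, not features


def active_signals(signals: TrialSignals) -> list[str]:
    """Return the names of the warning signs currently observed."""
    return [f.name for f in fields(signals) if getattr(signals, f.name)]


# Example review for a trial in its third month.
week_ten = TrialSignals(repetitive_testing=True, hypothetical_scenarios=True)
print(active_signals(week_ten))
```

The helper only lists which signs are present; deciding what to do about them remains a judgment call for the team.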

The Alternative Approach

Insurance buyers who successfully navigate software trials without falling into extended evaluation traps tend to follow a different pattern. They establish clear success criteria before the trial begins, defining specifically what questions the trial must answer and what evidence would constitute satisfactory answers. These criteria aren't vague aspirations like "ensure it meets our needs." They're concrete statements: "Confirm the platform can generate accurate quotes for our top five policy types within our current workflow" or "Verify that claims data can be migrated without manual re-entry."

These buyers also set firm time boundaries and defend them. A two-week intensive trial with real data, actual users, and focused objectives reveals more about a software's viability than a three-month gentle exploration with sanitized test scenarios. The intensity forces both the buyer's team and the vendor to confront real problems immediately rather than deferring them with promises of future fixes.

Critically, successful buyers recognize that perfect information doesn't exist. Every software purchase involves some degree of uncertainty, and no amount of additional testing eliminates that entirely. The question isn't whether the chosen solution will be flawless—it won't be. The question is whether it solves the core business problem adequately enough to justify moving forward. This reframing shifts the evaluation from seeking perfection to assessing sufficiency, a significantly more achievable standard.

Making the Commitment Decision

The moment to commit arrives not when every possible question has been answered, but when the core questions have been addressed and the team still possesses the cognitive capacity to act decisively. For most insurance software trials, this moment occurs somewhere between weeks two and four. Buyers who recognize and act on this timing don't make riskier decisions—they make better ones, because they're deciding while their judgment remains sharp rather than after decision fatigue has set in.

This doesn't mean rushing evaluation or skipping important validation steps. It means designing trials to front-load the most critical testing, engaging the right stakeholders from the start rather than adding them incrementally, and establishing clear decision points rather than allowing evaluation to drift indefinitely. The broader evaluation process for insurance software involves many considerations beyond the trial itself, but the trial's specific role is to validate whether the software performs as needed, not to achieve perfect certainty about every possible future scenario.

Insurance buyers who master this distinction, who can differentiate between productive validation and decision avoidance, make software commitments that actually reach production. They avoid the trap in which 59% of B2B buying processes end in no decision, not because they're less thorough, but because they understand that thoroughness has a point of diminishing returns. They recognize that the evaluation process itself can become the primary risk factor if allowed to extend beyond the window where decision-making capacity remains intact.

The counterintuitive reality is that shorter, more intense trials produce better outcomes than extended, gentle evaluations. This isn't because less testing is inherently superior—it's because focused testing forces confrontation with real issues while the team still has the mental resources to process what they're learning and act on it. Extended trials feel safer in the moment, but the data consistently shows they lead to either no decision or compromised decisions made under conditions of cognitive depletion. The most effective risk mitigation strategy isn't more testing. It's recognizing when you have enough information to decide, and deciding before that window closes.